This post is definitely not an attempt to join the online crusade against the SNAP authority. I am neither going to ask you to sign a petition nor encourage you to waste any time crying over it. Rather, we will look at the data and try to prove or disprove the arguments made by aspirants. In the absence of data, everyone has an opinion. Only when data enters the discussion can we differentiate between fact and opinion.

Everyone reading this article is affected by SNAP in some way. As highlighted in my SNAP 2020 strategy post, I wasn’t very happy with the difficulty level of the test. Even during the YouTube live session, we mentioned that this would lead to a tight distribution of scores and that every single mark would make a HUGE difference to the final percentile. We also received some flak for predicting high cutoffs (45 to 47 for the 98th percentile, 43 to 45 for the 97th percentile, and 38 to 40 for the 90th percentile), but our prediction was fair if you look at the actual scores of students: a score of 44.75 is the 98.56 percentile, 42 is the 96.36 percentile, and 38.5 is the 91.15 percentile.

If you have a minute, fill in the form here; I am capturing scores and percentiles.

Analysis based on the data collected (updated on Jan 23, 5 pm)

Sample Size: 80

30 of the 80 respondents took both the 20th December and the 9th January attempts. 13 of these scored higher in the second attempt than in the first, and the remaining 17 saw a drop in their scores. After the test and the result, pretty much everyone I spoke with said that the third slot was more difficult than the first. Yet the data contains people whose scores went up. How? As a student remarked:

Sir, I have filled the SNAP survey that you gave, just a comment that I wanted to add, even though both my exam scores were in the same range, it doesn’t mean that the difficulty level was same, as we had prepared more and the performance, in my opinion was better in the second attempt than the first. – Rajvi D, IMS student, 37.5 in the first attempt and 36.25 in the second.

This means it is possible that the slot 3 test was indeed more difficult than slot 1, but some students still did better or about the same because of factors such as improved content and preparation, a better strategy, and better execution on test day. These factors are not easily measurable. Does this mean that candidates who didn’t prepare much in the gap, and had similar content for both attempts, found the test more difficult, hence the drop in their scores?
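The comparison above boils down to a paired count: for each respondent who took two slots, compare the second score with the first. Here is a minimal Python sketch, assuming each respondent's scores are available as a (first attempt, second attempt) pair; the function name `compare_attempts` is hypothetical and not part of the raw data sheet.

```python
from typing import Dict, Iterable, Tuple


def compare_attempts(pairs: Iterable[Tuple[float, float]]) -> Dict[str, int]:
    """Count respondents whose second-attempt score rose, fell, or stayed the same.

    Each pair is (first_attempt_score, second_attempt_score) for one respondent.
    """
    counts = {"improved": 0, "dropped": 0, "unchanged": 0}
    for first, second in pairs:
        if second > first:
            counts["improved"] += 1
        elif second < first:
            counts["dropped"] += 1
        else:
            counts["unchanged"] += 1
    return counts


# Rajvi's two scores from the quote above: 37.5 (first attempt), 36.25 (second)
print(compare_attempts([(37.5, 36.25)]))
# → {'improved': 0, 'dropped': 1, 'unchanged': 0}
```

Fed the 30 paired rows from the raw data, it should return the 13 improved and 17 dropped reported above. A count like this says nothing about why a score moved, which is exactly the point of the student's remark.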

Also, look at the number of takers in each slot. Based on the data I have, the largest number of students appeared in the third slot, maybe because they wanted more time to prepare, the 6th January slot was very close to XAT, and people wanted to read the 20th December test reviews and plan better.

| Descriptive Statistics | 20th December | 6th January | 9th January |
|---|---|---|---|
| Mean | 33.60 | 36.54 | 33.42 |
| Median | 33.75 | 37.00 | 33.75 |
| Mode | 28.75 | 37.75 | 39.75 |
| Standard Deviation | 8.27 | 7.00 | 7.31 |
| Sample Variance | 68.42 | 48.99 | 53.49 |
| Range | 32.25 | 32.50 | 31.50 |
| Minimum | 16.50 | 19.00 | 13.25 |
| Maximum | 48.75 | 51.50 | 44.75 |
| Count | 42 | 27 | 60 |
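Every row of the table above is a standard descriptive statistic. Below is a minimal Python sketch that computes the same set for one slot, assuming the slot's scores are available as a list of numbers (for example, exported from the raw data sheet linked at the end of this post); `describe` is a hypothetical helper name. Standard deviation and variance use the sample (n − 1) formulas, in line with the table's "Sample Variance" label.

```python
import statistics
from typing import Dict, List


def describe(scores: List[float]) -> Dict[str, float]:
    """Descriptive statistics for one slot's SNAP scores."""
    return {
        "mean": statistics.mean(scores),
        "median": statistics.median(scores),
        # With several equally common scores, Python 3.8+ returns the first one seen.
        "mode": statistics.mode(scores),
        # Sample (n - 1) standard deviation and variance.
        "std_dev": statistics.stdev(scores),
        "variance": statistics.variance(scores),
        "range": max(scores) - min(scores),
        "min": min(scores),
        "max": max(scores),
        "count": len(scores),
    }


# Hypothetical scores, for illustration only (not from the survey data)
example = describe([30.0, 35.5, 35.5, 40.0])
print(example["mean"], example["median"], example["mode"], example["variance"])
```

As a quick consistency check on the table, each Sample Variance is the square of the corresponding Standard Deviation (8.27² ≈ 68.4), and each Range is Maximum minus Minimum (48.75 − 16.50 = 32.25).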

There is a reason why scores are normalized in non-standardized tests. Standardized tests are administered throughout the world and have statistical tools in place to weed out discrepancies in difficulty level or to discard questions after a period of time. They also have experimental questions built into the test instrument to gauge how the aspirant pool responds to such questions. Whether or not the tests are adaptive, there are defined benchmarks against which one can compare one’s performance.

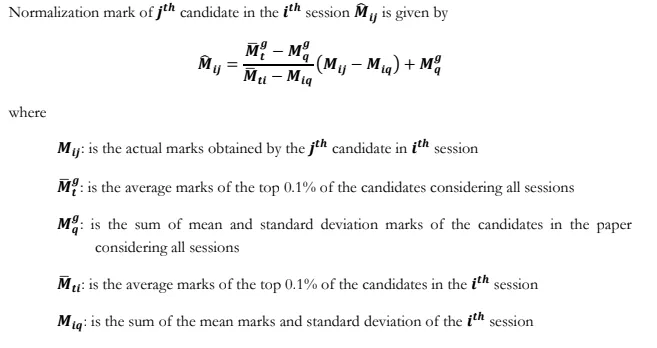

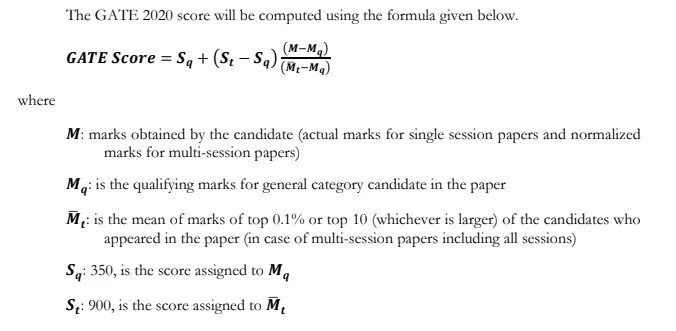

Non-standardized tests don’t have any of that. That’s why the scores are supposed to be normalized. Normalization is not rocket science; it is arguably child’s play for any b-school or testing authority. This is how GATE scores are normalized:

If you’re reasonably good at math, you should be able to follow this calculation. Now look at how IIT Madras normalized its recently conducted entrance test for one of its courses, which was also administered over three slots. Look at the logic used: scores were normalized to the question paper (QP) with the maximum average, capped at 100, never lower than the original score, plus 2 marks applied in the most beneficial manner. This is how you ensure that students are treated fairly and nobody is at a significant loss due to differences in the difficulty of the test.
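For readers who find code easier to follow than formulas, here is a minimal Python sketch of GATE-style normalization. It assumes the formula as published in GATE information brochures: a candidate's raw mark M in session i becomes (Mt_g − Mq_g) / (Mt_i − Mq_i) × (M − Mq_i) + Mq_g, where Mt is the average mark of the top 0.1% of candidates and Mq is the mean plus the standard deviation of marks, computed either for session i alone or across all sessions (g). Using the population standard deviation, and treating at least one candidate as the "top 0.1%" in small groups, are my assumptions; the official brochure is the authority on exact details.

```python
import math
import statistics
from typing import Dict, List


def top_mean(scores: List[float], fraction: float = 0.001) -> float:
    """Average of the top `fraction` of scores (assumption: at least one score)."""
    k = max(1, math.ceil(len(scores) * fraction))
    return statistics.mean(sorted(scores, reverse=True)[:k])


def mean_plus_sd(scores: List[float]) -> float:
    """Mean plus standard deviation (population SD is an assumption here)."""
    return statistics.mean(scores) + statistics.pstdev(scores)


def gate_normalize(sessions: Dict[str, List[float]]) -> Dict[str, List[float]]:
    """Map each session's raw marks onto the common, all-sessions scale."""
    pooled = [mark for marks in sessions.values() for mark in marks]
    mt_g, mq_g = top_mean(pooled), mean_plus_sd(pooled)
    normalized = {}
    for name, marks in sessions.items():
        mt_i, mq_i = top_mean(marks), mean_plus_sd(marks)
        # Linear map: the session's top-0.1% average lands on mt_g,
        # and the session's mean + SD lands on mq_g.
        slope = (mt_g - mq_g) / (mt_i - mq_i)
        normalized[name] = [slope * (mark - mq_i) + mq_g for mark in marks]
    return normalized


# Hypothetical marks for two sessions (illustration only)
result = gate_normalize({"slot_1": [10, 20, 30], "slot_2": [20, 30, 40, 50]})
print({name: [round(m, 2) for m in marks] for name, marks in result.items()})
```

The design is a straight-line stretch: two anchor points (the top performers and the mean plus SD) are lined up across sessions, so raw marks from a harder session get pulled up. In this toy run the top mark of each session lands on 50, the pooled top mark. The sketch does not guard against a session in which every candidate has the same mark, where the slope would divide by zero.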

The Maharashtra CET, which is conducted over four slots, also has a normalization mechanism. Whether they actually apply it is a different question, because (1) with 200 questions, most students are unable to pinpoint how many questions they genuinely attempted and how many they got right; only if someone marks x questions with 100% confidence and then sees a score of exactly x (or some other value y) can we tell whether the scores were normalized; and (2) there is no negative marking and the reported scores are integers. You can read more about CET normalization here: Equi-Percentile method.
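The equipercentile idea is simple to state: a score in one slot is treated as equivalent to the score in a reference slot that sits at the same percentile rank. Here is a minimal Python sketch, assuming mid-rank percentile ranks (ties count half) and straight-line interpolation between reference scores; the actual CET procedure may differ in these details, and the function names are hypothetical.

```python
from bisect import bisect_left, bisect_right
from typing import List


def percentile_rank(score: float, slot_scores: List[float]) -> float:
    """Percent of the slot scoring below `score`, counting ties as half."""
    ordered = sorted(slot_scores)
    below = bisect_left(ordered, score)
    tied = bisect_right(ordered, score) - below
    return 100.0 * (below + 0.5 * tied) / len(ordered)


def equate(score: float, slot_scores: List[float], reference_scores: List[float]) -> float:
    """Return the reference-slot score with the same percentile rank as `score`."""
    target = percentile_rank(score, slot_scores)
    values = sorted(set(reference_scores))
    ranks = [percentile_rank(v, reference_scores) for v in values]  # strictly increasing
    if target <= ranks[0]:
        return values[0]
    if target >= ranks[-1]:
        return values[-1]
    i = bisect_right(ranks, target)  # ranks[i - 1] <= target < ranks[i]
    fraction = (target - ranks[i - 1]) / (ranks[i] - ranks[i - 1])
    return values[i - 1] + fraction * (values[i] - values[i - 1])


# Hypothetical slots: slot B's scores run 10 marks higher than slot A's
slot_a = [10, 20, 30, 40]
slot_b = [20, 30, 40, 50]
print(equate(20, slot_a, slot_b))  # 20 in slot A sits where 30 sits in slot B
```

In principle, equating every slot onto one reference slot and then ranking all candidates together means a harder slot no longer drags its candidates' percentiles down.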

The bottom line is this: the SNAP authority should have normalized the scores and used those to calculate percentiles, or used the equipercentile method to arrive at the final percentile. They should also have revealed how many students appeared in each slot.

In the same Google form, I asked whether students scored in line with their expectations. 87.5% of respondents said ‘No’. This was not the result you deserved. However, don’t waste your time fighting over it. If something changes due to the ongoing efforts of students, great! But there is a chance that nothing will change (I am not taking a pessimistic stand here, but I’ve seen more test seasons than most of you and know how these things unfold).

Reference: In case anyone has doubts about the analysis presented, the entire raw data is available here: SNAP 2020 Score Distribution

I wish you guys all the best for the remaining tests of the season. A session on how to crack CET is just around the corner. Do comment with your thoughts, share with friends, and don’t forget to subscribe 🙂

Hello sir,

I have a doubt regarding the candidate type for the MH CET exam.

I completed my HSC in Maharashtra (Mumbai) but was not born in Maharashtra. Will I still be considered a Maharashtra State candidate? Also, is it mandatory to have a domicile certificate to register as a Maharashtra State candidate? If I don’t have one, will I be considered an All India candidate?

Hi Aditya. You will need a domicile certificate. Please go through the brochure here: https://drive.google.com/file/d/114sYqeFt6F6f3ZT2W5agv5aLVfMj2pdO/view

Refer to page 5. All the best!

JBIMS has only 18 seats in the All India quota and accepts so many exams. There will be more than 18 students scoring 99.99. How do they select?

Hi Utkarsh. Last year, 22 people scored 99.99 or higher across various tests. Some of them are Maharashtra candidates and hence would be assigned Maharashtra seats; the rest would be assigned All India seats. Check the scores here: https://drive.google.com/file/d/1a-hHcomkDlbJuTxhnVogVQfrzEX9HEVi/view

Hello Sir,

I got 84.8 percentile in SNAP, and I have around 3.5 years of experience in IT, so dropping a year is not a very viable option.

Are there any good colleges under Symbiosis from which I could get a call?

Also, what are your thoughts on SIDTM and SIOM? Is SIOM possible with 84.8 percentile? (I am a software engineer and want to get into project/product manager roles after my MBA.)

Thank you

SIOM looks borderline at that score. SIDTM should work out.